See What Others Cannot

The world's largest range-tagged LWIR dataset, integrated laser rangefinder sensing, event-based neuromorphic cameras, and AI models purpose-built for thermal — across every domain from automotive to military.

The World's Largest Range-Tagged LWIR Dataset

Every object annotated with laser rangefinder ground-truth distance. Not estimated depth — real measured range. This is the foundation that makes our AI fundamentally different.

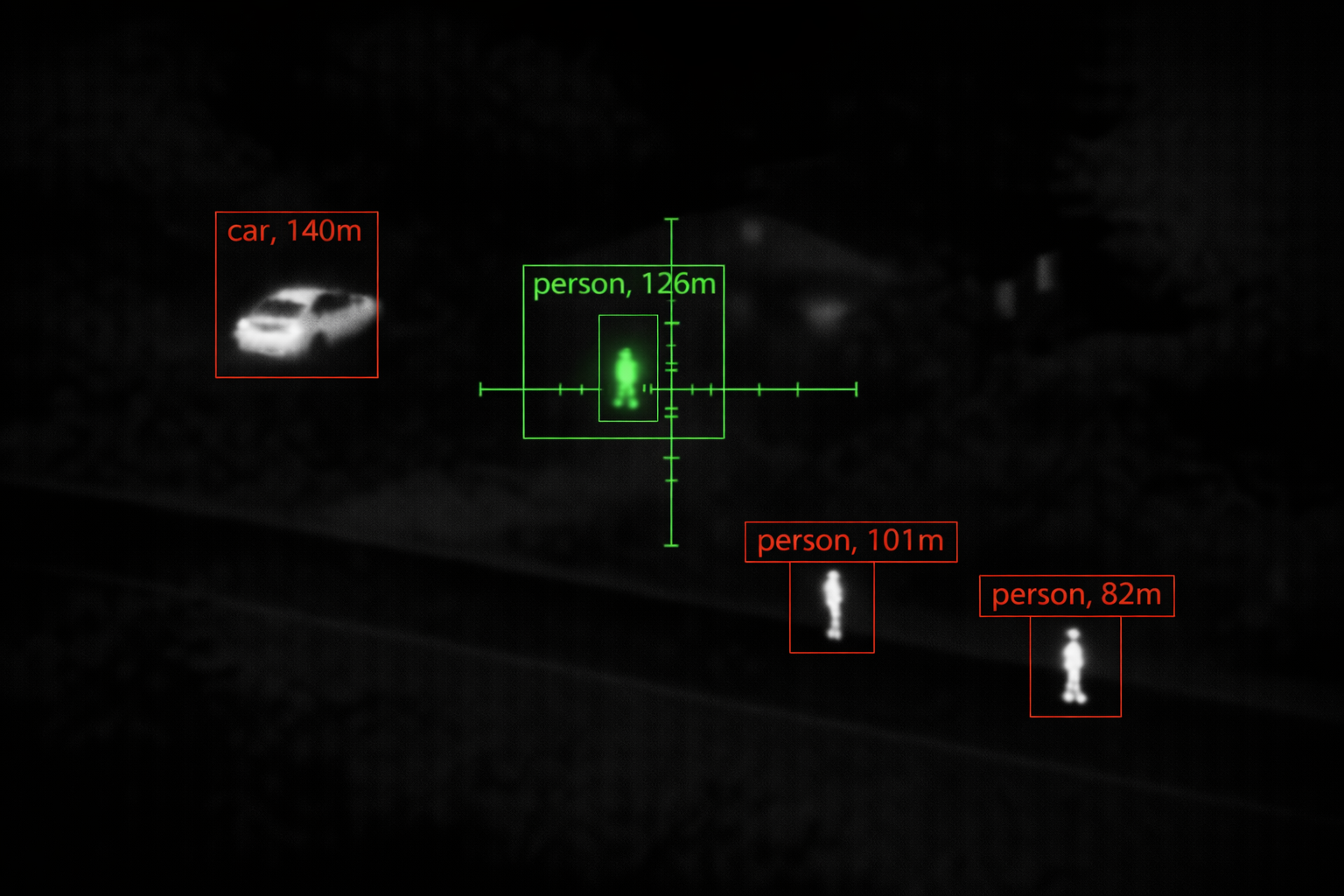

Live CVE output with OPT-AI detection and integrated LRF ranging. Every object classified and range-tagged in real time.

Why Range Changes Everything

Standard thermal AI sees a hot blob and guesses what it is. Our AI sees a hot blob and knows what it is, how far away it is, how big it actually is, and whether it matters at that distance. The difference is the dataset it was trained on.

Every frame in our dataset is captured with an integrated laser rangefinder measuring precise distance to each annotated object. A person at 50 metres has a completely different thermal signature than a person at 500 metres — different pixel count, different contrast against background, different atmospheric attenuation. Our models learn these range-dependent characteristics because they were trained on real range-tagged data, not synthetic augmentations.

This enables capabilities that no RGB-trained or depth-estimated model can match:

- Range-gated detection: ignore objects beyond a threshold distance and eliminate background clutter

- Size-at-range estimation: distinguish a person at 200 m from a vehicle at 2 km by thermal signature alone

- Distance-aware classification: the model is trained on long-range examples, so it is not extrapolating when it classifies distant objects

- Ballistic computation: LRF distance feeds directly into firing solutions for weapon sights

- False positive suppression: range context eliminates >60% of false detections in cluttered scenes
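As a rough illustration of the first two capabilities, the sketch below gates detections by measured range and converts a bounding-box height in pixels into an approximate physical height using the small-angle relation size = pixels x IFOV x range. The `Detection` record, the 0.5 mrad per-pixel IFOV, and the sample values are assumptions made for this example, not the production data model.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    range_m: float    # LRF-measured distance to the object, in metres
    height_px: float  # bounding-box height, in pixels


def range_gate(detections, max_range_m):
    """Keep only detections at or inside the gate distance."""
    return [d for d in detections if d.range_m <= max_range_m]


def physical_height_m(det, ifov_mrad):
    """Small-angle estimate of object height: pixels * IFOV (rad) * range."""
    return det.height_px * (ifov_mrad / 1000.0) * det.range_m


# Hypothetical values chosen for illustration only.
IFOV_MRAD = 0.5
detections = [
    Detection("person", 200.0, 18.0),
    Detection("vehicle", 2000.0, 2.5),
    Detection("clutter", 3500.0, 4.0),
]

for d in range_gate(detections, max_range_m=2500.0):
    print(d.label, round(physical_height_m(d, IFOV_MRAD), 2), "m")
```

With these assumed numbers the gate drops the 3.5 km return, and the two remaining objects come out at roughly 1.8 m and 2.5 m tall, even though the distant one covers far fewer pixels.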

Dataset Characteristics

- LRF Ground-Truth Range: Every annotated object carries laser-measured distance, not monocular depth estimation, not stereo disparity, not LiDAR point clouds. Real single-beam LRF range, the same sensor your deployed system uses.

- Multi-Environment Capture: Urban, rural, forest, desert, maritime, arctic. Day, night, dawn, dusk. Rain, fog, snow, dust, smoke. All four seasons. The model sees everything it will encounter in the field.

- Engagement-Range Coverage: Annotated objects from 5 metres to beyond 3 kilometres. Close-range detail and long-range signature are both present and labelled, so the model never extrapolates.

- Continuous Growth: The dataset grows with every deployed system. Field data feeds back into the training pipeline, improving model accuracy for specific operational environments and emerging threat types.

Try Our Detection Engine

Upload an LWIR thermal image and watch our YOLO model detect and classify every object in the scene — running real neural inference directly in your browser.

This demo runs a lightweight model (v1, 15 classes) optimized for browser-based WebAssembly inference. Production deployments use higher-capacity models with extended class libraries, LRF range data, and GPU-accelerated inference. Contact us to evaluate the full system.

Purpose-Built for Every Domain

Hundreds of object classes organized into application-specific libraries. Each domain trained on real thermal data captured in real operational conditions with real LRF range.

Automotive & ADAS

Pedestrians, cyclists, motorcycles, cars, trucks, buses, construction vehicles, animals on roadway, road infrastructure. Captured on highways, urban streets, rural roads, parking facilities. All weather and lighting conditions including headlight glare, rain reflection, and fog.

- Pedestrian detection to 250m+

- Cyclist and motorcycle discrimination

- Animal-on-road alerting (deer, dog, livestock)

- Fog, rain, and night scenarios

Hunting & Wildlife

Deer (whitetail, mule, red), elk, moose, wild boar, bear, coyote, wolf, turkey, feral hog. Thermal signatures in dense foliage, open fields, ridgelines, and waterways. Dusk and dawn activity patterns, bedded versus standing postures, partial occlusion behind vegetation.

- Species-level classification in thermal

- Posture detection (standing, bedded, moving)

- Partial occlusion handling in foliage

- Range-to-target with LRF integration

Military & Defense

Dismounted personnel, light vehicles (technical, MRAP), heavy armour (MBT, IFV), rotary and fixed-wing aircraft, small UAS/drones, concealed positions, muzzle flash, IED indicators. Multi-terrain: urban, woodland, desert, littoral. Engagement ranges from CQB to 3km+.

- Combatant vs. civilian discrimination

- Vehicle type classification at range

- Concealed and camouflaged target detection

- Small UAS tracking and classification

Industrial & Infrastructure

Overheating electrical connections, bearing failures, steam and gas leaks, insulation defects, personnel in restricted zones, PPE compliance. Captured in substations, refineries, manufacturing plants, and data centres. Includes normal baseline and fault-condition pairs for anomaly training.

- Fault vs. normal thermal signature pairs

- Gas plume visualization classes

- Personnel zone-intrusion detection

- Temperature-calibrated annotations

Integrated Laser Rangefinder

Every thermal frame paired with real-time laser-measured distance. Not an accessory — a core part of the sensing architecture.

From Detection to Decision

A thermal camera tells you something is there. A laser rangefinder tells you how far. When both are fused at the sensor level, the system moves from passive detection to active decision support.

In a weapon sight, LRF distance feeds directly into the ballistic computer — the reticle shifts to show the correct holdover for that exact range, compensating for bullet drop, wind, and target motion. The shooter sees one aiming point, not a mental calculation.
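To make the range-to-holdover step concrete, here is a deliberately simplified sketch. It assumes a drag-free, flat-fire trajectory zeroed at the muzzle with no wind, which is not how a fielded ballistic computer works (those use drag models, atmospheric inputs, and a stored weapon profile). The function name and inputs are hypothetical.

```python
G = 9.80665  # standard gravity, m/s^2


def holdover_mrad(range_m, muzzle_velocity_mps):
    """Drag-free holdover in milliradians for a flat shot.

    Assumes constant horizontal velocity, so time of flight is
    range / velocity and drop is 0.5 * g * t^2.
    """
    time_of_flight_s = range_m / muzzle_velocity_mps
    drop_m = 0.5 * G * time_of_flight_s ** 2
    # Small-angle conversion: drop divided by range, expressed in mrad.
    return 1000.0 * drop_m / range_m


print(round(holdover_mrad(400.0, 800.0), 2))
```

Under these assumptions a 400 m measurement at 800 m/s gives about 3.06 mrad. The point of the sketch is only that the measured range is the input that fixes the aiming offset.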

In a drone, LRF provides slant range to target for accurate geolocation — converting a pixel on a thermal image into GPS coordinates on a map. In an ADAS system, LRF confirms that the detected pedestrian is 80 metres ahead, not a reflection 500 metres away.
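The geolocation step can be sketched as follows, assuming a spherical Earth, a true bearing already derived from the IMU and thermal line of sight, and an elevation angle measured from the horizontal. The function name and parameters are illustrative, and a real system would also account for sensor altitude, terrain, and datum.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius, spherical approximation


def target_position(lat_deg, lon_deg, bearing_deg, elevation_deg, slant_range_m):
    """Project a slant-range measurement to target latitude and longitude."""
    # Horizontal ground distance from slant range and look angle.
    ground_m = slant_range_m * math.cos(math.radians(elevation_deg))
    delta = ground_m / EARTH_RADIUS_M  # angular distance on the sphere
    lat1 = math.radians(lat_deg)
    lon1 = math.radians(lon_deg)
    theta = math.radians(bearing_deg)

    # Standard great-circle destination-point formula.
    lat2 = math.asin(
        math.sin(lat1) * math.cos(delta)
        + math.cos(lat1) * math.sin(delta) * math.cos(theta)
    )
    lon2 = lon1 + math.atan2(
        math.sin(theta) * math.sin(delta) * math.cos(lat1),
        math.cos(delta) - math.sin(lat1) * math.sin(lat2),
    )
    return math.degrees(lat2), math.degrees(lon2)


# Hypothetical observer position and measurement.
print(target_position(48.0, 11.0, bearing_deg=45.0, elevation_deg=-10.0,
                      slant_range_m=1500.0))
```

Converting the resulting latitude and longitude into a grid reference is a separate step that is not shown here.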

In our AI pipeline, LRF is what turns a 2D thermal dataset into a 3D understanding of the scene. Every training sample carries a measured distance, so the deployed model inherits range awareness without needing a rangefinder at inference time. Adding a live LRF measurement at inference improves results further.

LRF Capabilities

- Real-Time Range Overlay: Distance to crosshair centre displayed on every frame. Updated at up to 10 Hz for moving targets. Integrated into the display compositor alongside thermal video and AI overlay.

- Ballistic Computation: Measured range + atmospheric data + weapon profile = automatic holdover and windage. Displayed as a shifted aiming point or BDC reticle. Supports multiple ammunition profiles stored in memory.

- Lead Angle for Moving Targets: OPT-AI tracks the target velocity vector. Combined with LRF range and ballistic profile, the system computes and displays the correct lead angle for an engagement on a moving target.

- Range-Gated AI Filtering: Set a maximum engagement range. AI only reports detections within that range; everything beyond is suppressed. Eliminates background clutter and reduces operator cognitive load in dense scenes.

- Target Geolocation: Thermal bearing + LRF slant range + GPS + IMU = target GPS coordinates. One button press to generate a 10-digit grid reference for artillery, CAS, or ISR handoff.

- Dataset Ground-Truth: Every LRF measurement in the field is logged alongside the thermal frame and AI detections. This data feeds back into the training pipeline, continuously improving range-aware model accuracy.
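The lead-angle item in the list above reduces to simple geometry once the inputs are available. The sketch below assumes the tracker supplies the target's crossing speed (the velocity component perpendicular to the line of sight), the ballistic profile supplies a time of flight, and the target holds a constant velocity. All names and numbers are hypothetical.

```python
import math


def lead_angle_mrad(crossing_speed_mps, time_of_flight_s, range_m):
    """Angular lead for a target moving across the line of sight.

    The target travels crossing_speed * time_of_flight metres while the
    round is in the air; the lead is that offset seen from range_m away.
    """
    lead_m = crossing_speed_mps * time_of_flight_s
    return 1000.0 * math.atan2(lead_m, range_m)


# Example: 5 m/s crossing speed, 0.6 s time of flight, 400 m LRF range.
print(round(lead_angle_mrad(5.0, 0.6, 400.0), 2))
```

With these assumed inputs the target moves 3 m during the flight time, which corresponds to a lead of about 7.5 mrad at 400 m.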

Event-Based Neuromorphic Sensing

Frame-based cameras capture everything at fixed intervals. Event cameras capture only change — at microsecond resolution. Combined with LWIR, they create a sensing system with no blind spots in time.

Microsecond Temporal Resolution

Each pixel independently reports brightness changes as they happen, with no fixed frame rate and no shutter. Fast-moving objects that smear across a conventional frame are captured without motion blur. Ideal for muzzle flash detection, projectile tracking, and fast-slewing gimbal compensation.
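A common way to model this per-pixel behaviour is a log-intensity threshold: the pixel emits an ON or OFF event each time its log brightness moves by a fixed contrast step from the level at its last event. The sketch below simulates one pixel under that assumed model. The threshold value and the sample data are illustrative and do not describe any specific sensor.

```python
import math


def pixel_events(samples, threshold=0.25):
    """Simulate one event-camera pixel.

    samples: list of (timestamp_us, intensity) pairs for a single pixel.
    Returns a list of (timestamp_us, polarity) events, polarity +1 or -1.
    """
    events = []
    reference = math.log(samples[0][1])  # log level at the last event
    for timestamp_us, intensity in samples[1:]:
        diff = math.log(intensity) - reference
        # A large change produces several events in a row.
        while abs(diff) >= threshold:
            polarity = 1 if diff > 0 else -1
            events.append((timestamp_us, polarity))
            reference += polarity * threshold
            diff = math.log(intensity) - reference
    return events


samples = [(0, 100.0), (10, 100.0), (20, 200.0), (30, 200.0), (40, 120.0)]
print(pixel_events(samples))
```

In this toy trace the pixel stays silent while the intensity is constant, fires two ON events when the brightness doubles, and fires one OFF event when it falls back.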

120+ dB Dynamic Range

Event cameras handle extreme contrast without saturation or loss. A bright explosion next to a dim thermal signature — both captured simultaneously. No HDR compositing, no tone mapping, no lost detail. Critical for scenes mixing fire, flares, or sunlight with subtle thermal targets.

LWIR + Event Fusion

Conventional LWIR provides absolute thermal context: temperature, classification, NUC-corrected imagery. The event camera provides change detection at microsecond scale. Fused together, the LWIR tells you what is there and the event camera tells you what just moved. Two modalities, one fused perception.

Ultra-Low Bandwidth

Event cameras output only changes — in a static scene, data rate drops to near zero. Bandwidth scales with scene activity, not resolution or frame rate. Enables always-on surveillance with minimal power, processing, and storage. Ideal for battery-operated or bandwidth-constrained deployments.
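The scaling argument can be shown with back-of-envelope arithmetic. The resolution, bit depth, event size, and event rates below are assumptions chosen for illustration, not measured figures for any particular sensor.

```python
def frame_rate_bps(width, height, bits_per_pixel, fps):
    """Raw data rate of a frame camera: every pixel, every frame."""
    return width * height * bits_per_pixel * fps


def event_rate_bps(events_per_second, bits_per_event):
    """Raw data rate of an event stream: only pixels that changed."""
    return events_per_second * bits_per_event


# Assumed 640x512 sensor, 16-bit pixels, 50 frames per second.
frame_bps = frame_rate_bps(640, 512, 16, 50)

# Assumed 64-bit events (x, y, polarity, timestamp) at two activity levels.
quiet_bps = event_rate_bps(10_000, 64)
busy_bps = event_rate_bps(1_000_000, 64)

print(frame_bps / 1e6, "Mbit/s frame stream")
print(quiet_bps / 1e6, "Mbit/s event stream, quiet scene")
print(busy_bps / 1e6, "Mbit/s event stream, busy scene")
```

Under these assumptions the frame stream costs the same roughly 262 Mbit/s whether or not anything moves, while the event stream ranges from under 1 Mbit/s in a quiet scene to 64 Mbit/s in a busy one.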

What Event Sensing Catches That Frames Miss

A conventional 50 Hz thermal camera captures 50 snapshots per second. Consecutive snapshots are 20 milliseconds apart (1/50 s), and anything shorter than that interval can fall between them unobserved. An event camera has no such gaps: it observes continuously at microsecond granularity.

In those 20-millisecond gaps:

- Muzzle flash: lasts 1 to 3 ms, often entirely between frames. The event camera captures it with precise timing and location.

- Projectile trail: supersonic rounds cross the field of view in under a frame. The event camera records the trajectory as a continuous stream of pixel events.

- Fast drone transit: a small UAS at 100 m/s travels 2 m between consecutive 50 Hz frames and can cross a narrow FOV in very few frames. The event camera tracks it continuously without motion blur.

- Laser spot flicker: pulsed laser designators blink at kHz rates. The event camera detects every pulse; a frame camera sees nothing between frames.

- Vibration compensation: vehicle-mounted systems experience continuous vibration. Event data lets the system detect and compensate for micro-movements faster than a mechanical stabiliser can respond.
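The numbers behind the list above follow from the frame period alone. The short sketch below works them out for a 50 Hz camera. The helper names are made up for this example, and the 1 kHz pulse rate is an assumed value within the "kHz rates" mentioned above.

```python
def frame_period_ms(frame_rate_hz):
    """Time between consecutive frames, in milliseconds."""
    return 1000.0 / frame_rate_hz


def travel_between_frames_m(speed_mps, frame_rate_hz):
    """Distance an object covers between two consecutive frames."""
    return speed_mps / frame_rate_hz


def pulses_per_frame(pulse_rate_hz, frame_rate_hz):
    """Number of laser pulses emitted during one frame period."""
    return pulse_rate_hz / frame_rate_hz


FRAME_RATE_HZ = 50.0

print(frame_period_ms(FRAME_RATE_HZ))                 # frame period in ms
print(travel_between_frames_m(100.0, FRAME_RATE_HZ))  # 100 m/s drone, metres
print(pulses_per_frame(1000.0, FRAME_RATE_HZ))        # assumed 1 kHz designator
print(3.0 / frame_period_ms(FRAME_RATE_HZ))           # 3 ms flash as share of period
```

At 50 Hz the frame period is 20 ms, a 100 m/s target moves 2 m per frame, a 1 kHz designator pulses 20 times per frame, and a 3 ms flash occupies 15% of one frame period.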

Deployment Scenarios

- Counter-UAS: Detect and track small, fast-moving drones that conventional cameras lose between frames. The event camera provides a continuous trajectory for interception cueing.

- Sniper Detection: Muzzle flash captured with microsecond precision, geolocated against the thermal background. Direction and range to shooter computed before the sound arrives.

- High-Speed Inspection: Production lines moving too fast for frame cameras. The event camera detects defects, vibrations, and anomalies at the speed of the process itself.

- Perimeter Surveillance: Static scene = near-zero data. Movement = instant alert. Power consumption proportional to threat activity, not camera resolution. Run for weeks on battery.

Build With the Best Thermal AI

Our dataset, our models, your product. License the trained models, integrate the CVE platform, or partner with us to capture domain-specific training data for your application.